FramePack: Lightweight Local AI Video Generator for Creators

Overview

FramePack is a next-generation open-source AI video generation framework developed by Stanford University researchers. Unlike cloud-based video models that require data-center GPUs or paid subscriptions, FramePack is built to run locally on machines with as little as 6GB of VRAM, which makes realistic video generation practical at home, at school, or in the studio.

What Makes FramePack Unique

FramePack stands out for generating high-quality AI videos up to 60 seconds long on modest hardware. It uses a compressed, diffusion-based architecture with a layered frame prediction engine, which keeps it efficient without sacrificing output realism. For indie creators, educators, developers, and researchers, FramePack makes AI video creation accessible with no cloud service required.

Key Features

Low-VRAM Video Diffusion Engine: Runs on GPUs with 6GB+ VRAM

Up to 60 Seconds of Footage: Generate long sequences with stable motion and coherence

Frame Layering & Compression: Achieve cinematic fluidity without heavy compute load

Prompt-to-Video Pipeline: Input natural language and generate consistent motion visuals

Reference Image Support: Upload base visuals for story, tone, or character reference

Social & Creative Applications

Great for TikTok, Reels, Shorts, YouTube videos, concept animations, educational explainers, and indie film projects

Exports in 16:9 and 9:16 formats with adjustable frame rate (24–60 FPS)

Ideal for AI filmmakers, digital artists, animation studios, students, and developers experimenting with local workflows
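
Switching between the 16:9 and 9:16 formats mentioned above comes down to a centered crop. The sketch below is not part of FramePack; `center_crop_box` and `ffmpeg_crop_filter` are hypothetical helper names. It computes the largest centered box with the target aspect ratio and formats it as an FFmpeg `crop=w:h:x:y` filter string that could be applied in post-processing.

```python
def center_crop_box(src_w: int, src_h: int, ratio_w: int, ratio_h: int):
    """Return (width, height, x, y) of the largest centered box with the target ratio."""
    # Compare aspect ratios by cross-multiplication to stay in integer arithmetic.
    if src_w * ratio_h > src_h * ratio_w:
        # Source is wider than the target: keep full height, trim the sides.
        out_h = src_h
        out_w = src_h * ratio_w // ratio_h
    else:
        # Source is taller than (or equal to) the target: keep full width.
        out_w = src_w
        out_h = src_w * ratio_h // ratio_w
    # Round down to even dimensions, which yuv420p video encoding expects.
    out_w -= out_w % 2
    out_h -= out_h % 2
    x = (src_w - out_w) // 2
    y = (src_h - out_h) // 2
    return out_w, out_h, x, y


def ffmpeg_crop_filter(box) -> str:
    """Format a crop box as an FFmpeg crop filter argument."""
    w, h, x, y = box
    return f"crop={w}:{h}:{x}:{y}"


# Example: cut a vertical 9:16 clip out of a 1920x1080 landscape frame.
box = center_crop_box(1920, 1080, 9, 16)
print(box)                      # (606, 1080, 657, 0)
print(ffmpeg_crop_filter(box))  # crop=606:1080:657:0
```

The resulting string can be passed to FFmpeg's `-vf` option when re-encoding a clip for Reels or Shorts.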

Who Should Use FramePack?

Hobbyists & Indie Creators: Generate test scenes, music videos, or AI experiments without cloud dependency

Students & Educators: Use for interactive media learning or visual AI coursework

Researchers: Integrate with other AI pipelines to test prompt fidelity and motion control

3D Artists & Designers: Turn concept visuals into stylized motion renders

Pros

Local video generation without cloud costs

Supports longer sequences than most diffusion models

Runs on consumer-level NVIDIA GPUs with 6GB+ of VRAM (recent RTX cards are the safer choice; older GTX cards may not be supported)

Fully open-source for experimentation and extension

Cons

Requires technical setup (Linux or Colab, Python, PyTorch)

UI not built for casual creators (command-line or code notebooks)

No built-in social publishing tools or captions

Platform & Technical Requirements

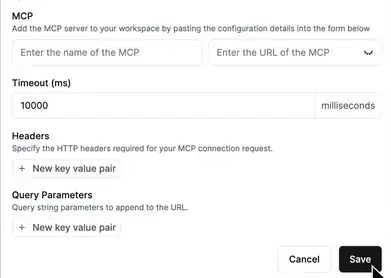

Official GitHub: https://github.com/lllyasviel/FramePack

Install Requirements: Python, PyTorch, FFmpeg, 6GB+ NVIDIA GPU

Runtime: Local Linux system or Colab (works within limited RAM)

Export Format: MP4, WebM
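
Before installing, it can help to confirm that the machine meets the requirements listed above. The following is a minimal sketch, not an official FramePack script: `check_environment` and `meets_requirements` are hypothetical names, and the 6GB threshold is simply the figure quoted in this listing. It uses only the standard library plus PyTorch's `torch.cuda` API when PyTorch is already installed.

```python
import importlib.util
import shutil
import sys

MIN_VRAM_GB = 6  # threshold quoted in this listing, not read from FramePack itself


def check_environment() -> dict:
    """Collect basic facts about Python, FFmpeg, PyTorch, and the GPU."""
    report = {
        "python": sys.version.split()[0],
        "ffmpeg": shutil.which("ffmpeg") is not None,  # is ffmpeg on PATH?
        "torch": importlib.util.find_spec("torch") is not None,
        "cuda": False,
        "vram_gb": None,
    }
    if report["torch"]:
        try:
            import torch
        except ImportError:
            # Package is present but cannot be imported; treat it as missing.
            report["torch"] = False
            return report
        if torch.cuda.is_available():
            report["cuda"] = True
            total_bytes = torch.cuda.get_device_properties(0).total_memory
            report["vram_gb"] = round(total_bytes / 1024**3, 1)
    return report


def meets_requirements(report: dict) -> bool:
    """True only if FFmpeg, PyTorch, CUDA, and enough VRAM are all present."""
    return bool(
        report["ffmpeg"]
        and report["torch"]
        and report["cuda"]
        and report["vram_gb"] is not None
        and report["vram_gb"] >= MIN_VRAM_GB
    )


report = check_environment()
print(report)
print("Ready for local generation:", meets_requirements(report))
```

If the check fails only on VRAM or CUDA, the Colab route mentioned above is the fallback.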

How to Use FramePack

Clone the FramePack repo from GitHub

Set up dependencies on your local machine or open in Colab

Write a text prompt or supply a reference image as input

Run the frame generation script and wait for processing

Download and refine output for Shorts, Reels, or your app
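
The clone, setup, and run steps above can be sketched as a short list of shell commands. This is an illustration under stated assumptions rather than the project's documented procedure: `build_setup_commands` is a hypothetical helper, the presence of a `requirements.txt` file is assumed, and the entry script name is a placeholder to be replaced with whatever the repository's README specifies. The commands are only printed, not executed.

```python
import shlex


def build_setup_commands(repo_url: str, entry_script: str) -> list[list[str]]:
    """Return the clone, install, and run commands as argument lists."""
    # Derive the checkout directory from the last URL segment, as git does.
    repo_dir = repo_url.rstrip("/").rsplit("/", 1)[-1].removesuffix(".git")
    return [
        ["git", "clone", repo_url],
        # Assumes the repository ships a requirements.txt file.
        ["python", "-m", "pip", "install", "-r", f"{repo_dir}/requirements.txt"],
        # entry_script is a placeholder; check the README for the real name.
        ["python", f"{repo_dir}/{entry_script}"],
    ]


# Placeholder URL and script name; substitute the repository URL listed on this page.
for cmd in build_setup_commands("https://example.com/owner/FramePack.git", "generate.py"):
    print(shlex.join(cmd))
```

Keeping the commands as argument lists makes them safe to hand to `subprocess.run` later without shell quoting issues.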

💡 Watch a hands-on demo: FramePack Demo on YouTube

Frequently Asked Questions

Q: Can FramePack generate videos for TikTok or YouTube?

A: Yes. It exports videos that can be formatted to 9:16 or 16:9, which suits Reels, Shorts, or long-form use.

Q: Does it work on Windows or Mac?

A: FramePack is currently optimized for Linux systems and Colab. Windows setup may require WSL; Mac support is limited.

Q: Do I need a powerful GPU?

A: No. FramePack runs with as little as 6GB of VRAM, though more VRAM speeds up generation.

Q: Is FramePack free to use?

A: Yes. It’s fully open-source and available for research, education, and creative use.

Why FramePack is a Breakthrough for Local AI Video Creation

FramePack democratizes AI video creation by removing cloud barriers. Whether you're a student experimenting, a filmmaker prototyping, or an AI enthusiast building something new, this tool lets you generate extended video content on your own device, for free.

Pro Tips for FramePack Users

Use reference images to guide style and scene fidelity

Keep prompts concise but visual (“a glowing drone flies over neon Tokyo at night”)

Batch generate and trim clips in CapCut or Premiere Pro

Test frame rates (24 FPS for cinematic, 60 FPS for dynamic reels)

Use Colab if your device doesn’t meet hardware requirements
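
When testing frame rates as suggested above, it helps to know how many frames each setting implies, since every frame has to be generated. The arithmetic is simply duration times frame rate; `frame_count` below is a hypothetical helper, and the idea that generation time grows roughly in proportion to frame count is an assumption, not a measured FramePack result.

```python
def frame_count(duration_s: float, fps: int) -> int:
    """Number of frames needed for a clip of the given length and frame rate."""
    return round(duration_s * fps)


# Frames required for a full 60-second clip at common frame rates.
for fps in (24, 30, 60):
    print(f"{fps} FPS -> {frame_count(60, fps)} frames")
# 24 FPS -> 1440 frames
# 30 FPS -> 1800 frames
# 60 FPS -> 3600 frames
```

A 60 FPS clip needs 2.5 times as many frames as a 24 FPS clip of the same length, so starting tests at 24 FPS is the cheaper option on a 6GB card.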
